Now that we’ve got a working little ASCII compressor it’s time to talk about how “real” compression algorithms work.

Now honestly I should probably just drop a couple wiki links to Huffman_Coding and DEFLATE but presumably you’re here on my blog because you specifically want to hear me talk about computer science so here we go!

Let’s start by refreshing our memories on how we approached the problem of compression.

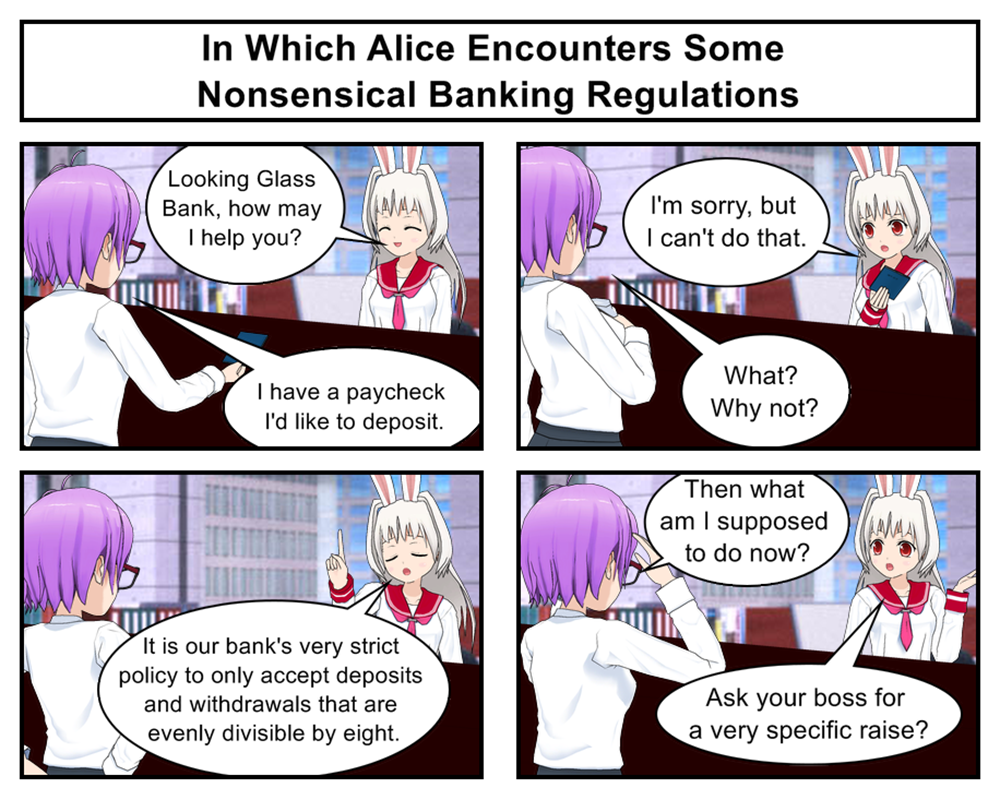

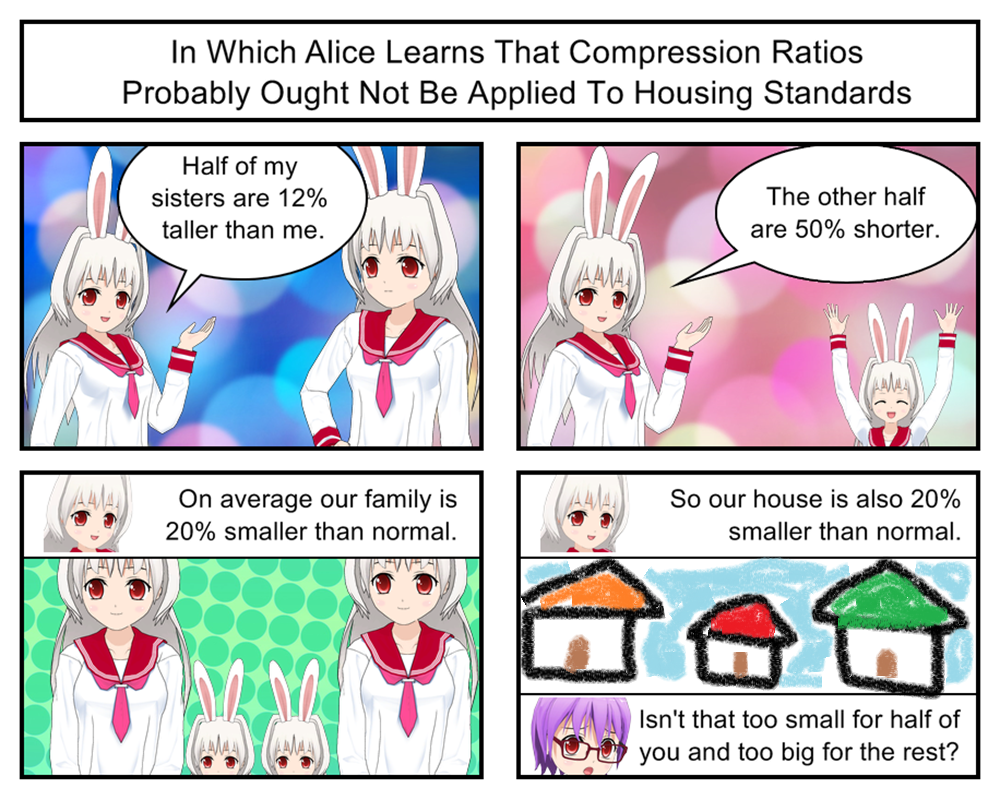

We started by discovering that normal ASCII text assigns a full 8-bits to every possible letter. We then did some research and found that some ASCII letters are much more common than others. That let us invent a new standard that squished common letters into 4-bits while expanding less common letters into 9-bits. This overall resulted in files that were 20-30% smaller than their plan ASCII equivalents.

So how would a professional approach the same problem?

Believe it or not they would do more or less the same thing. Professional tools achieve their impressive 80%+ compression standards using the same basic approach we did: Find unusually common data patterns and then replace them with much smaller compressed place holders. The professional tools are just much much smarter about how they find and compress these patterns than we were.

How much smarter? Let’s find out by comparing and contrasting what we did against what better compression tools do.

Our OK Idea: We designed our compression patterns based around our knowledge of average letter frequencies in the English language. While this worked OK there was the problem that not every text file follows the average English pattern. A report about zigzagging zebras is going to have a ton of z’s and g’s while a C++ code file is going to have lots of brackets and semi-colons. Our average English compression strategy won’t work very well on files like that.

Their Better Idea: Professional tools don’t use a single universal encoding scheme but instead create a unique encoding for every file based on their individual symbol frequency. So if a particular text file has a ton of “z”s or parenthesis or brackets it will get its own custom code that compresses those letters. This lack of a universal standard does mean that each compressed file has to start with a dictionary explaining the specific compression pattern used for that file but the space saved by using an optimized compression pattern for each file more than makes up for the space taken up by the dictionary.

Our OK Idea: We not only used the same compression codes for every single file, we used the same compression codes throughout every file. This was easy to program but could be sub-optimal on longer files. If a book starts out talking about zigzagging zebras but later focuses on electric eels then one half of the book might compress better than the other.

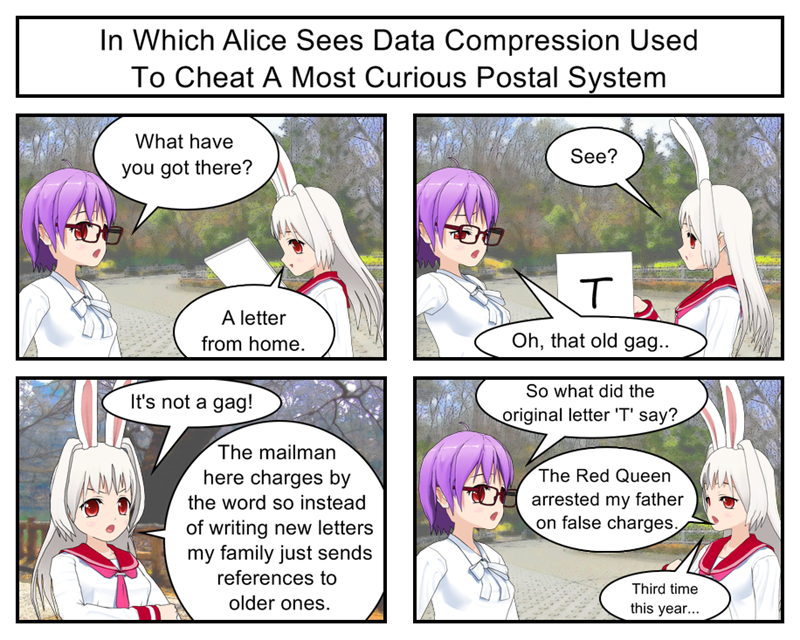

Their Better Idea: Many compressional algorithms split files into multiple segments and then compress them individually before gluing them together into an output file. This way each segment can have its own optimized compression pattern. So if one half of a file had a lot of “z”s but no “l”s it could get a compression pattern focused on “z”s. If the second half of the file switched things around and had lots of “l”s but almost no “z”s the algorithm could then switch to a new compression pattern that shrunk down the “l”s and did nothing with “z”s. Of course this means each compressed file has to have multiple dictionaries explaining the specific compression pattern and length of each file segment but once again the space you save in better compression outweighs the space you lose from the extra dictionaries.

Our OK Idea: Our compression code focused only on ASCII text files. This made it easy to design and test our project but also severely limits it’s utility.

Their Better Idea: Admittedly some professional tools also only focus on one file type. For example, PNG only compresses image files. But many other professional compression tools work by looking for repeated bit patterns of any sort and length. This lets them compress any file made of bits which is, well, all of them. Sure, not every file compresses particularly well but at least there are no files you plain can’t run the algorithm on.

Our OK Idea: Our compression code worked by replacing common 8-bit letter patterns with smaller 4-bit patterns. This means that even in a best case scenario (a file containing only our eight compressible common letters) we could only shrink a file down to 50%.

Their Better Idea: Professional tools don’t restrict themselves to only looking for common 8-bit patterns but can instead find repeated patterns of any length. This can lead to massive compression by finding things like commonly used 256-bit patterns that can be replaced with two or three bit placeholders for 99% compression. Of course not every file is going to have conveniently repeated large bit sequences but being able to recognize these opportunities when they do happen opens up a lot of possibilities.

So there you have it. The main difference between professional compression algorithms and our toy is that professional programs spend a lot more time upfront analyzing their target files in order to figure out an ideal compression strategy for that specific file. This obviously is going to lead to better results than our naive “one size fits all” approach.

But figuring out the ideal compression strategy for a specific file or type of file is often easier said than done! To go much deeper into that we’d have to start seriously digging into information theory and various bits of complex math and that’s way beyond the scope of this blog. But if this little project piqued your interest I’d definitely encourage you to take some time and study up on it a bit yourself.

BONUS PROJECT FOR ENTERPRISING PROGRAMMERS

Hopefully this article has given you a few ideas on how our little toy compressor could be improved. And while you probably don’t want to try anything as drastic as implementing a full Huffman style algorithm I think there are a lot of relatively simple improvements you could experiment with.

For example, what if you got rid the hard coded set of “English’s eight most common symbols” and instead had your algorithm begin compression by doing a simple ASCII letter count to figure out the eight most common symbols for your specific target file? You could then have your algorithm assign compression short codes to those eight symbols which should probably lead to results at least a few percentage points smaller than our one size fits all prototypes

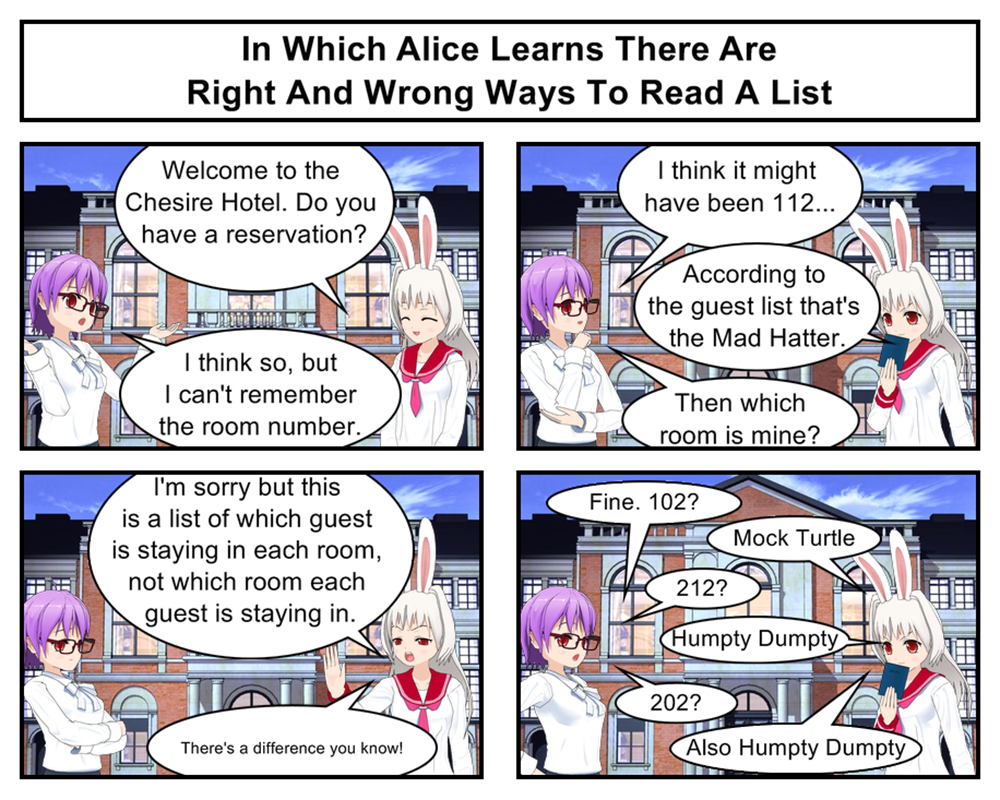

Of course, in order to decompress these files you’ll have to start your file with a list of which short codes map to which letters. You could do this with some sort of pair syntax (ex: {0000:z,0001:l,0010:p}) or maybe just have the first eight bytes in a compressed file always be the eight symbols from your compression map.

And with that we’re pretty much done talking about compression, but speaking of improvements reminds me that my Lisp code is still slow as cold tar. So if you have any interest in Lisp at all I’d like to encourage you to tune in again next week as we analyze and speed up our code and maybe even talk a little about that “functional programming”.